Archiving Network Flows from vRealize Network Insight to Log Insight

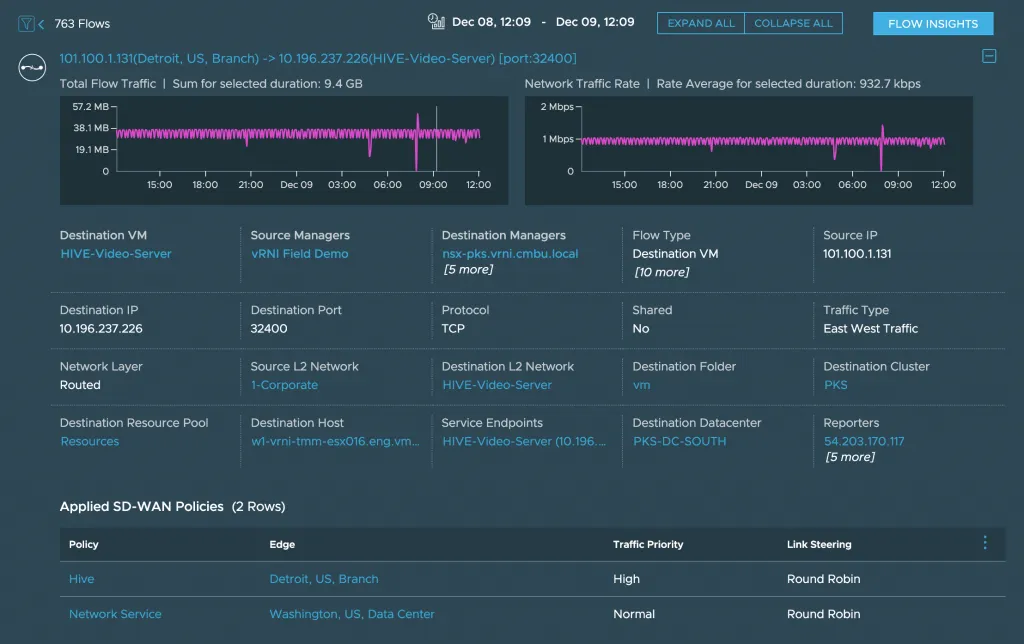

vRealize Network Insight (vRNI) captures all traffic going through the network. It stores the traffic in flow records, each made up of a source, destination, protocol, and port number. Metrics are attached to each record so you can graph the traffic behavior.

After creating the flow, vRNI attaches a lot of context to it: Is it coming from a VM? Is there a firewall rule attached? Which vCenter is this flow going through? What kind of SD-WAN policies are attached? If any of this context changes (e.g., a VM got renamed), it also saves snapshots of the flow with a timestamp, so you can see how that VM was named last week. All of that context, snapshots, and metrics take up space. That’s why flows can currently only be saved for 1 month in vRNI.

Note: this retention applies only to flows that are closed. Connections that happened 2 months ago and never happened again will be expired. Connections that started 2 months ago and are still actively used will still be in vRNI.
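The retention rule in the note can be sketched in a few lines. This is only an illustration of the idea that expiry depends on when a flow was last active, not on when it started; the function name `is_expired` is hypothetical, and "1 month" is approximated as 30 days.

```python
from datetime import datetime, timedelta

# Assumption: "1 month" is approximated as 30 days for illustration.
RETENTION = timedelta(days=30)


def is_expired(last_seen: datetime, now: datetime,
               retention: timedelta = RETENTION) -> bool:
    """Return True when the flow's last activity is older than the retention."""
    return now - last_seen > retention


now = datetime(2020, 12, 7)

# Last seen two months ago and never again: expired.
closed_flow_expired = is_expired(datetime(2020, 10, 7), now)

# Started two months ago, but last seen yesterday: still kept.
active_flow_expired = is_expired(datetime(2020, 12, 6), now)
```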

Why you’d need longer retention

Typically, one month of data for troubleshooting and monitoring is more than fine. But there are other use cases for longer flow retention. The primary reason seems to be security auditing: being able to tell there was a connection between source x and destination y over port z at a certain date and time. To archive this, there’s no need for the large amount of context and metrics that vRNI adds, so we can reduce the amount of data when archiving the flows into a long-term system.
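To make the data reduction concrete, here is a minimal sketch of stripping a context-rich flow down to the fields an audit needs. The dictionary keys and the `ArchivedFlow` class are hypothetical names chosen for illustration; they are not the vRNI data model.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ArchivedFlow:
    """Only what an audit needs: who talked to whom, how, and when."""
    timestamp: str
    source_ip: str
    destination_ip: str
    protocol: str
    port: int


def to_archive_record(enriched: dict) -> ArchivedFlow:
    """Keep the audit fields and drop context, snapshots, and metrics."""
    return ArchivedFlow(
        timestamp=enriched["timestamp"],
        source_ip=enriched["source_ip"],
        destination_ip=enriched["destination_ip"],
        protocol=enriched["protocol"],
        port=int(enriched["port"]),
    )


# Hypothetical enriched flow; the key names are assumptions.
enriched_flow = {
    "timestamp": "2020-12-07 21:30:00",
    "source_ip": "10.173.22.90",
    "destination_ip": "10.173.22.130",
    "protocol": "TCP",
    "port": 443,
    "firewall_rule": "allow-https",
    "metrics": {"bytes": 123456},
    "snapshots": [],
}

record = to_archive_record(enriched_flow)
```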

Archiving Flows to vRealize Log Insight

In a few customer engagements, I used vRealize Log Insight (vRLI) as that long-term system. It holds unstructured data, you can create dashboards around that data, the search features are pretty awesome, and you can archive logs to secondary storage.

We decided to create a script that runs every day, retrieves the flows from the last 24 hours via the vRNI APIs, and sends them to vRLI.
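For a sense of what the "send to vRLI" half can look like, here is a rough sketch that builds an ingestion request with only the standard library. It assumes the vRLI ingestion (CFAPI) endpoint `/api/v1/events/ingest/{agent_id}` on port 9543 and an `events` payload with `text` and `fields`; verify both against the API documentation for your vRLI version. The helper names and the field names (`vrni_src_ip`, `vrni_port`) are hypothetical, and this is not the code of the actual script.

```python
import json
import urllib.request
import uuid


def make_event(text, fields):
    """Build one event: a log line plus name/content pairs for extra context."""
    return {
        "text": text,
        "fields": [{"name": k, "content": str(v)} for k, v in fields.items()],
    }


def build_ingest_request(server, events, port=9543, agent_id=None):
    """Prepare (but do not send) a POST to the assumed ingestion endpoint."""
    agent_id = agent_id or str(uuid.uuid4())
    url = f"https://{server}:{port}/api/v1/events/ingest/{agent_id}"
    body = json.dumps({"events": events}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


event = make_event(
    "vRNI-Flow: 2020-12-07 21:30:00 ALLOW TCP 10.173.22.90 -> 10.173.22.130 [port:443]",
    {"vrni_src_ip": "10.173.22.90", "vrni_port": 443},  # hypothetical field names
)
request = build_ingest_request(
    "fd-vrli.cmbu.local",
    [event],
    agent_id="00000000-0000-0000-0000-000000000001",
)

# Sending is left out on purpose; in a real run it would be:
# urllib.request.urlopen(request)
```

Building the request separately from sending it keeps the payload easy to inspect before anything goes over the network.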

Python & PowerShell Scripts

Different customers wanted different base languages, so this script exists in two forms: one using PowervRNI and another using the Python SDK. Both produce the same output, just on a different platform. I’ll focus on Python, as that seems to be the more popular one.

Installing the Python SDK

The Python SDK is an auto-generated Swagger library, and installing it is relatively straightforward:

git clone https://github.com/vmware/network-insight-sdk-python.git
cd network-insight-sdk-python
pip install -r requirements.txt

The best part of downloading the entire repository is that there’s an example directory with a ton of different scripts. Including flows_to_vrli.py.

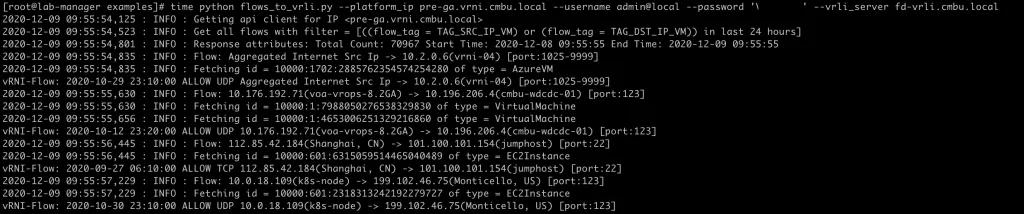

Running flows_to_vrli.py

The vRNI team did a great job with the examples. There are a ton of helper functions that all examples use, meaning everything can be configured with parameters; you don’t need to change the scripts at all. Here’s how to run the flows_to_vrli.py script:

export PYTHONPATH=../swagger_client-py2.7.egg/
python flows_to_vrli.py --platform_ip vrni.local \
  --username admin@local --password 'VMware1!' \
  --vrli_server fd-vrli.cmbu.local

The first line makes sure the script can find the SDK. The parameters should speak for themselves. You’ll need to authenticate with a member or auditor user against vRNI, but not against vRLI. By default, it’ll use the default CFAPI port 9543, but you can change that by adding the parameter --vrli_port.

It’ll look something like this:

Put this in a scheduled task (crontab) to run every day, and your flows will be automatically archived!

Result

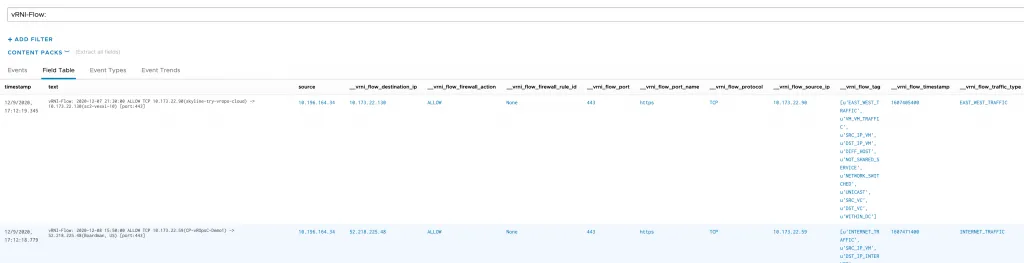

The result in vRLI will be formatted the same as firewall rule hit logs:

vRNI-Flow: 2020-12-07 21:30:00 ALLOW TCP 10.173.22.90(skyline-try-vrops-cloud) -> 10.173.22.130(sc2-vesxi-10) [port:443]

You can filter on the source IP, destination IP, protocol, port name, and even the VMs involved. Another great thing about vRLI is that you can attach context, called fields, to a syslog entry. The flows_to_vrli.py script attaches fields with more context from vRNI, which can also be used to filter and search.
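If you ever need to process these archived lines outside of vRLI, the format is regular enough to parse. The following is a sketch based only on the single sample line above; the regular expression and the `parse_flow_line` helper are my own assumptions, and other lines produced by the script may differ (for example, flows without a VM name).

```python
import re

# Pattern derived from the one sample line; VM names in parentheses are optional.
FLOW_LINE = re.compile(
    r"^vRNI-Flow: (?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) "
    r"(?P<action>\S+) (?P<protocol>\S+) "
    r"(?P<src_ip>[^\s(]+)(?:\((?P<src_name>[^)]*)\))? -> "
    r"(?P<dst_ip>[^\s(]+)(?:\((?P<dst_name>[^)]*)\))? "
    r"\[port:(?P<port>\d+)\]$"
)


def parse_flow_line(line):
    """Return the flow as a dict, or None if the line does not match."""
    match = FLOW_LINE.match(line.strip())
    if match is None:
        return None
    flow = match.groupdict()
    flow["port"] = int(flow["port"])
    return flow


sample = (
    "vRNI-Flow: 2020-12-07 21:30:00 ALLOW TCP "
    "10.173.22.90(skyline-try-vrops-cloud) -> "
    "10.173.22.130(sc2-vesxi-10) [port:443]"
)
parsed = parse_flow_line(sample)
```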


Filtering Flows

There’s one modification you could make to the script to change the filter that determines which flows are returned. Look for CONFIG_FILTER_STRING:

CONFIG_FILTER_STRING = "((flow_tag = TAG_SRC_IP_VM) or (flow_tag = TAG_DST_IP_VM)) in last 24 hours"

This is the search query that is run via the API to return the flows. It returns only flows that involve a VM as the source or destination (any traffic to or from VMs) and that have been active in the last 24 hours.

If you want to run this script every week, use “in last 7 days”. Or if you want to run it every 4 hours, use “in last 4 hours”. You get it.
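The time window should match how often the script runs, so it can help to build the filter string from the schedule instead of editing it by hand. This is a small sketch; `build_filter` is a hypothetical helper that is not part of the script, and it only covers the "hours" and "days" units mentioned here.

```python
# Base filter from the script: flows with a VM as source or destination.
BASE_FILTER = "((flow_tag = TAG_SRC_IP_VM) or (flow_tag = TAG_DST_IP_VM))"


def build_filter(amount, unit, base=BASE_FILTER):
    """Append an 'in last N hours/days' window to a base filter."""
    if amount < 1:
        raise ValueError("amount must be at least 1")
    if unit not in ("hours", "days"):
        raise ValueError("unit must be 'hours' or 'days'")
    return f"{base} in last {amount} {unit}"


daily_filter = build_filter(24, "hours")
weekly_filter = build_filter(7, "days")
```

Keeping the window equal to the run interval avoids both gaps and large overlaps between consecutive runs.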

I’ve included a bunch of examples inside the script to show you how to configure other filters if needed.

# you can also change the filter to anything the search API supports.
# Below are a few examples for inspiration:
#
# filter on a destination IP address
# CONFIG_FILTER_STRING = "destination_ip.ip_address = '192.168.21.20'"
# filter on a specific network port
# CONFIG_FILTER_STRING = "port = 123"
# retrieve only flows related to this vSphere cluster
# CONFIG_FILTER_STRING = "source_cluster.name = 'HaaS-Cluster-6'"
# filter by a specific network
# CONFIG_FILTER_STRING = "destination_l2_network.name = 'vlan-1014'"
# return only flows with security tag 'OPI' and exclude internet traffic
# CONFIG_FILTER_STRING = "(source_security_tags.name = 'OPI' or destination_security_tags.name='OPI') and (flow_tag != TAG_INTERNET_TRAFFIC)"
# return only internet traffic and from a specific vSphere cluster
# CONFIG_FILTER_STRING = "((flow_tag = TAG_INTERNET_TRAFFIC) and (source_datacenter.name = 'HaaS-1'))"

Conclusion

While at some point it would be cool to have this natively in vRealize Network Insight, this script should make it possible to meet requirements to keep network flow records indefinitely.