By default, the syslog capability in vRealize Network Insight only supports UDP on port 514, sending the messages in cleartext. It's important to have Network Insight send its logs somewhere, though, as they can be useful when troubleshooting Network Insight itself.

To be clear, these logs contain information about the Network Insight platform and collector appliances themselves: logs on processing incoming data, and errors when the collector is unable to connect to a data source (vCenter, switch, NSX, router, etc.). If you're looking for logs on changes in the network that Network Insight monitors, look at the System and User-Defined Events.

vRealize Log Insight Agent

It's a well-kept secret that both the platform and collector appliances have a vRealize Log Insight agent installed on them. This vRLI agent can be configured via the CLI on the appliances. Once configured, the agent sends the same logs as the syslog configuration, but over the vRLI API using HTTPS, so they're encrypted in transit.

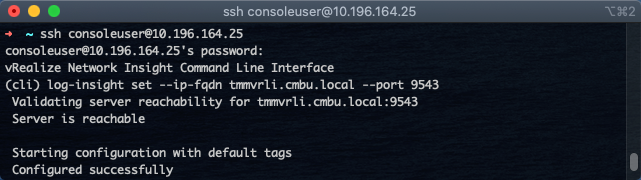

Here's an example on how to configure it:

In the above example, I used tmmvrli.cmbu.local as the vRLI server (can also be an IP instead of a hostname) and port 9543 - which is the HTTPS port for the vRLI API.

Obviously, this requires a vRealize Log Insight instance. If the desired target is another log repository (e.g., Splunk), vRLI can be set up to forward the logs that come from Network Insight. That forwarding can then use secure syslog, so the whole chain stays encrypted.

April 21, 2021 at 16:09

How do you add an enterprise root CA/chain certificate(s) so that the Log Insight agent on vRNI accepts the enterprise SSL certificate installed in vRLI?

2021-04-21 14:00:37.030272 0x00007777770b3700 SSLVerifyContex:165| Certificate pre-verify error = 19 while trying connect to ‘vrli1.mydomain.com’. self signed certificate in certificate chain

2021-04-21 14:00:37.031128 0x00007777770b3700 CurlConnection:723 | Transport error while trying to connect to ‘vrli1.mydomain.com’: SSL peer certificate or SSH remote key was not OK

2021-04-21 14:00:37.031153 0x00007777770b3700 CFApiTransport:108 | Postponing connection to vrli1.mydomain.com:9543 by 166 sec.

2021-04-21 14:03:23.031249 0x00007777770b3700 CFApiTransport:128 | Re-connecting to server vrli1.mydomain.com:9543

April 28, 2021 at 19:28

That’s not something vRNI can do out of the box, but you can edit the vRLI agent config (/etc/liagent.ini) on the vRNI appliances to give it the CA. Log in as the support user, sudo to root, edit /etc/liagent.ini and modify the `;ssl_ca_path=/etc/pki/tls/certs/ca.pem` line (remove the leading `;` and point it at your CA file), then restart the liagentd service. Note that upgrades, and reconfiguring the vRLI settings as the consoleuser, will overwrite that change.
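As a rough sketch of that edit, the helper below uncomments and sets `ssl_ca_path` in a liagent.ini-style file. The function name `set_ssl_ca_path` is made up for illustration, and the paths are just the ones mentioned above; treat it as a starting point rather than a supported procedure.

```shell
#!/bin/sh
# Hypothetical helper (not part of vRNI or the vRLI agent):
# point ssl_ca_path in a liagent.ini-style file at a CA bundle.
set_ssl_ca_path() {
    ini_file="$1"    # e.g. /etc/liagent.ini
    ca_bundle="$2"   # e.g. /etc/pki/tls/certs/ca.pem

    # Bail out if there is no ssl_ca_path line (commented or not) to rewrite.
    grep -Eq '^[;[:space:]]*ssl_ca_path[[:space:]]*=' "$ini_file" || return 1

    tmp_file="$(mktemp)" || return 1
    # Drop the leading ';' (the comment marker) and set the new value.
    sed -E "s|^[;[:space:]]*ssl_ca_path[[:space:]]*=.*|ssl_ca_path=${ca_bundle}|" \
        "$ini_file" > "$tmp_file" || { rm -f "$tmp_file"; return 1; }

    # Write through the existing path instead of replacing it,
    # so that a symlinked config file stays a symlink.
    cat "$tmp_file" > "$ini_file"
    status=$?
    rm -f "$tmp_file"
    return "$status"
}
```

After sudo-ing to root, this would be called as `set_ssl_ca_path /etc/liagent.ini /etc/pki/tls/certs/ca.pem`, followed by a restart of liagentd (for example `systemctl restart liagentd`, assuming the appliance manages the service with systemd). The content is written back with `cat` rather than an in-place `sed -i` because /etc/liagent.ini may be a symlink, and an in-place edit can replace the link with a regular file.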

April 28, 2021 at 19:46

Thanks. I’ll look into it.

We also have a support case open with VMware. It's not closed yet, but it seems the “hidden support” for liagent might be going away and normal syslog is the way to go. I'll wait for an official answer, though.

April 28, 2021 at 19:52

It’s not going away. The vRLI data source is going away. That’s where vRLI would notify vRNI of NSX security changes via a webhook because vRNI wasn’t polling fast enough yet – which was fixed in vRNI a while back.

April 29, 2021 at 00:31

Ah. I’ve misunderstood then. I’m on vRNI 6.1 (and vRLI 8.1) at the moment on VCF 4.2.

Good to know.

April 30, 2021 at 14:59

Hi Martijn.

So I’ve tested a little more, and found an “interesting” bug of sorts… I’m locked on vRLI v8.1 for the moment due to VCF 4.2, so it might have been fixed in newer versions, but as this is sort of a “hidden gem”, it might not have been noticed.

If we log in to a vRNI proxy or platform node, where we have not touched any liagent configuration, we see the following:

root@vrni-platform-release:/etc# ls -l liagent.ini

lrwxrwxrwx 1 root root 44 Apr 29 12:26 liagent.ini -> //var/lib/loginsight-agent/liagent.ini

So editing /etc/liagent.ini actually edits the liagent.ini in the lib folder, since it’s a symlink. So far so good.
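A quick way to check where the symlink currently points, sketched as a small helper. The function name `resolve_config` is hypothetical, and it assumes `readlink -f` is available (it is part of GNU coreutils).

```shell
#!/bin/sh
# Hypothetical helper: report which file a (possibly symlinked)
# config path resolves to.
resolve_config() {
    config_path="$1"   # e.g. /etc/liagent.ini
    if [ -L "$config_path" ]; then
        # readlink -f follows the link and prints the canonical target path.
        printf '%s is a symlink to %s\n' "$config_path" "$(readlink -f "$config_path")"
    else
        printf '%s is not a symlink\n' "$config_path"
    fi
}
```

Running `resolve_config /etc/liagent.ini` before and after changing the agent configuration shows whether the link target has moved.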

Looking into this file, we find no settings for what to push to vRLI: there are no [filelog|syslog] or [filelog|audit] sections, etc.

This magic, I’ve figured out, gets added automatically when you use the consoleuser and the “limited” vRNI CLI to configure it, as you describe in this blog post:

(cli) log-insight --ip-fqdn --port 9543

With this command entered, the liagent is configured with the correct [filelog|syslog] settings etc. But something strange also happens: the /etc/liagent.ini symlink changes! It now points to another file:

root@vrni-proxy-release:/etc# ls -l liagent.ini

lrwxrwxrwx 1 root root 44 Apr 29 12:26 liagent.ini -> /var/lib/loginsight-agent/liagent_onprem.ini

That shouldn’t be a problem, you’d think; the vRNI CLI should have this under control, right? Wrong!

Editing /etc/liagent.ini, which in reality edits /var/lib/loginsight-agent/liagent_onprem.ini, does nothing. Even after restarting liagentd, none of your manual changes (in my case the ssl_ca_path variable) take effect.

What does work, though, is changing the /var/lib/loginsight-agent/liagent.ini file directly. Restarting liagentd after adding my enterprise CA chain to ssl_ca_path makes liagent accept my enterprise CA-signed certificate.

So it seems to be a bug in the vRNI CLI for “log-insight set …” or in the code for the liagent itself that creates an “onprem” ini file as well.
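To compare the two files side by side, something like the sketch below prints the active (uncommented) ssl_ca_path line from each file it is given. The function name `show_ssl_ca_path` is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the active (uncommented) ssl_ca_path line
# of each config file passed as an argument.
show_ssl_ca_path() {
    for ini_file in "$@"; do
        # Lines starting with ';' are comments, so only uncommented keys match.
        value="$(grep -E '^[[:space:]]*ssl_ca_path[[:space:]]*=' "$ini_file" 2>/dev/null | tail -n 1)"
        printf '%s: %s\n' "$ini_file" "${value:-<not set>}"
    done
}
```

For example, `show_ssl_ca_path /var/lib/loginsight-agent/liagent.ini /var/lib/loginsight-agent/liagent_onprem.ini` shows at a glance which of the two files carries the setting.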